The main purpose of Week 3’s class was to become better critical thinkers – not just to critique other people’s ideas, but more importantly, to critique our own. As is the new normal, Producer Vic and I chatted about it afterwards, and recorded our conversation as a podcast – see the bottom of this blog post for the details of the audio and video versions. You can find the slideshow from my lecture here on Slideshare. In case you want to take a look at the reading list, here it is again: GLBL252 Courage Syllabus.

What does critical thinking have to do with courage? The connection is explained very well by a mysterious source that I found online, Thinking Tools to Improve Your Life on the Westside Toastmasters website. It is more or less an online book, with a multitude of thought-provoking insights and exercises, and I found it a very useful exposition of how to bring extra rigour to our thinking.

(As an aside, I tried to find out who the original author was by emailing Westside Toastmasters, and received this tantalising response:

“That content has been on our group’s website for a number of years. Our group’s membership turns over at a fairly fast clip each year so the institutional memory is not deep and there were no obvious indications as to the author of the content, unfortunately.”

So if you’re out there, Enigmatic Author, thank you for your wisdom, and please get in touch!)

Fair-Minded Thinking

The site says:

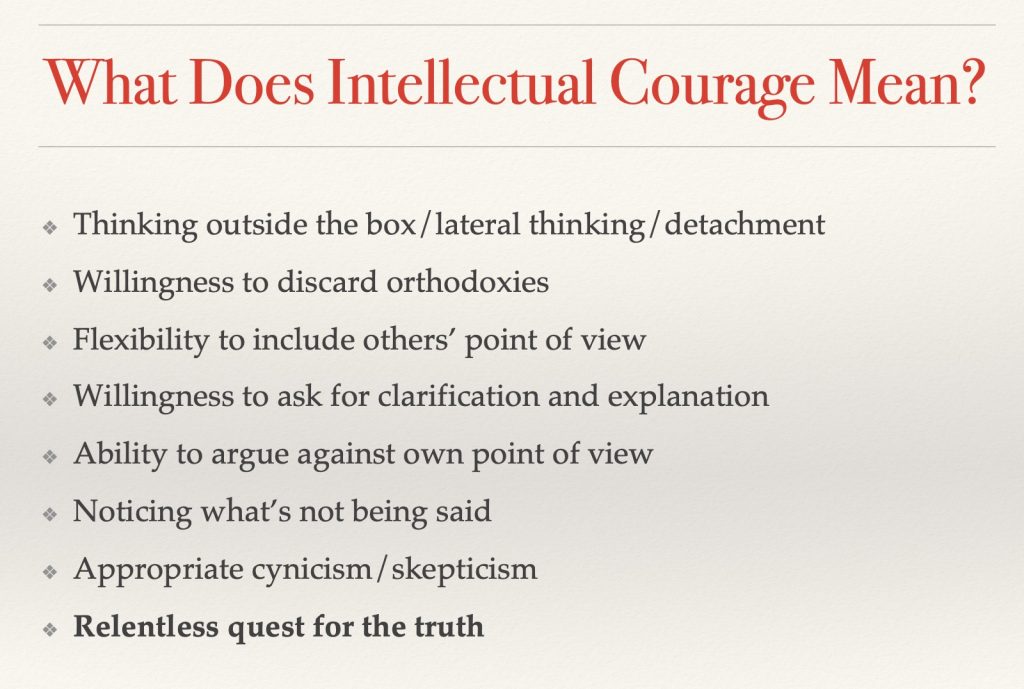

“Intellectual courage may be defined as having a consciousness of the need to face and fairly address ideas, beliefs or viewpoints toward which one has strong negative emotions and to which one has not given a serious hearing. Intellectual courage is connected to the recognition that ideas that society considers dangerous or absurd are sometimes rationally justified (in whole or in part). Conclusions and beliefs inculcated in people are sometimes false or misleading. To determine for oneself what makes sense, one must not passively and uncritically accept what one has learned. Intellectual courage comes into play here because there is some truth in some ideas considered dangerous and absurd, and distortion or falsity in some ideas held strongly by social groups to which we belong. People need courage to be fair-minded thinkers in these circumstances. The penalties for nonconformity can be severe.”

These social penalties have been very much in evidence on both sides of the Atlantic in recent times, as Brexit and the US election have polarised voters, and dogmatic positions have been adopted by many on both sides. Developing this ability to have rational, mature, open conversations about issues that have become political hot potatoes is going to be essential if we are to heal these deep divides – if that is even possible within this generation. Sticking to a win/lose, I’m-right/you’re-wrong position is going to get us nowhere but further divided.

If you take a look at this week’s slideshow, you’ll see that I set out a whole range of cognitive errors that are exploited by advertisers, politicians, salesmen, negotiators, lawyers, and anybody who is trying to persuade us to a different point of view.

Confirmation Bias

If you value truth and clarity, these cognitive biases are all worth keeping in mind, but Confirmation Bias is what I want to particularly focus on in this blog post, because above all, this is what creates rigidity, dogma, and division.

We are not blank slates. None of us is totally objective, much as we like to think we are. In this week’s reading about public intellectuals, it was interesting to note that some writers believe that we need these bastions of neutrality. But can there ever truly be such a thing? By the time we are mature enough to be regarded as intellectuals, we have inevitably acquired a few opinions along the way, and to pretend otherwise is disingenuous.

(This is not to say that we don’t need intellectuals who are willing to speak out on matters of public policy – we most definitely do, as we’re in danger of celebrities moving into the current vacuum – but I don’t think it’s realistic to pretend that they don’t have personal perspectives.)

According to Thinking Tools to Improve Your Life, these are the main factors that influence our worldview:

- Vocational – work environment

- Sociological – social groups

- Philosophical – our personal philosophy

- Ethical – our obligations and how important we deem them

- Intellectual – our ideas, how we deal with abstractions

- Anthropological – cultural practices and taboos

- Ideological and political – structure of power, interest groups

- Economic – our economic conditions

- Historical – our history and how we tell it

- Biological – our biology and neurology

- Theological – religious beliefs and attitudes

- Psychological – our personality and psychology

- Physiological – physical condition, stature, and weight

- Parental – what examples did our most influential role models set for us? (I added this one)

If each of these factors is like a piece of card, we are a pin that binds them together, and they fan out around us. We are a unique combination of our individual positions on each of these planes. And we bring these preconceptions to every decision we make.

(Think you’re unbiased? Think again. Try the Implicit Association Test (18 million people already have). And prepare to be surprised.)

Confirmation bias shows up in so many ways. Here are my Top Three.

Self-Limiting Beliefs

I was listening to an interview with Mahzarin Banaji, co-author of Blindspot: Hidden Biases of Good People and co-creator of the Implicit Association Test. She mentioned that, in order to overcome implicit bias in recruitment, one organisation chose to supplement the usual process of inviting applications by asking people working in that field which of their colleagues they most respected. They found that many of the individuals most often recommended had not submitted applications – usually because they didn’t think they were ready, or that they “weren’t the kind of person” who would be selected.

So we are often victims of our own biases about what kind of person would be hired for a certain kind of role. Lack of diversity is most definitely a problem, but the fault may not lie entirely with the employers and recruiters. Sometimes those who would represent “diversity” don’t put themselves forward because of their assumptions about the lack of diversity, and it becomes self-fulfilling.

We also know that people will edit, distort, and delete what they perceive in order to reinforce what they have already decided about themselves. Some research suggests that individuals with high social anxiety are more likely to misinterpret vague or neutral social cues (e.g., facial expressions) as negative.

Further, our self-limiting biases can be contagious. Whether we believe ourselves to be confident, shy, attractive, unattractive, or whatever, that is how we will project ourselves, and that is how other people will perceive us, creating a feedback loop that verifies what we have already decided about ourselves.

The Echo Chamber

Modern society has given us the option to surround ourselves with people who look like us, think like us, agree with us. In the madding crowds of city life, we can seek out kindred spirits and avoid the rest. Google learns what kind of content we like, and gives us more of the same, creating the illusion that the whole world thinks the same way as we do. Facebook plays on our prurience by promoting the most sensational content over the most accurate or edifying – its goal is not to inform us, but to keep us online for long enough to fall for the clickbait and generate revenue for Facebook. And with a myriad of news outlets, we can choose the one that best resonates with our pre-existing worldview.

This chart has been controversial (but of course), and you can disagree with the details, but the overall point is that the media are not neutral – like all human beings, journalists and editors have opinions. And we are likely to gravitate towards the opinions that resonate most closely with our own. It would be very interesting to read a publication from the opposite end of the political spectrum, not to denigrate or mock it, but to genuinely see what valuable insights it might yield.

Relationships (and Arguments)

Raise your hand if you have never had a disagreement – with a relative, a friend, a spouse, the local planning officer, a politician, or the manager of your football team on the TV screen. I am assuming that there are very few hands in the air at the moment, as I don’t suppose I have that many Buddhist monks living alone in caves reading my blog.

And of course, during that argument, you believed you were right, right? Maybe you still do. But so did the person you were arguing with.

How did the argument end? Did one of you say, “Ah, now I see the error of my ways! You are correct, and I am wrong.”

Probably not.

And how did that leave you feeling? Maybe upset, resentful, angry, critical of the other person for being such an idiot. Chances are, they felt the same.

When you step back from the immediate situation, can you see why they might have a different view than you do? Could it be that, rather than stemming from some deep character flaw, your difference of opinion is due to one of the factors listed above? If you had been subjected to the same influences that they have, is it possible that you might see things the same way that they do?

You’ve probably also noticed that facts don’t win an argument. If somebody has already made up their mind, they will find ways to rationalise, discount, or discredit any facts that you summon to support your case. We tend to make decisions with our emotions, and then use our brains to create a post facto story about why we’re right. So unless you’re arguing with the emotionless Mr Spock, you’re unlikely to win the day by appealing purely to rationality, as your opponent will doubtless have a very different view than you do as to what constitutes a rational point of view.

We all have our blind spots. Even you, yes, you. Even me. A few years ago I took an Insights psychometric test administered by my cousin, a qualified practitioner. He suggested I go through the resulting report and highlight any sections that I wanted to discuss, especially any areas that I disagreed with. There was a rash of highlights in one particular section… until I noticed that the section was entitled “Possible Blind Spots”. Ah.

As a different spin on this, if you have made up your mind that somebody is a liar, or lazy, or untrustworthy, or a spendthrift, or disorganised, or bad at timekeeping, you will notice only the evidence that proves you right, and ignore evidence to the contrary.

And, of course, confirmation bias can work to the good as well. Have you heard the one about the teacher who complained that she was tired of teaching low-achieving students and asked the head if she could have a higher-calibre class? The next term she was delighted to see her list of students and their high IQ scores. She told her class how happy she was to be teaching the brightest and best. She had a wonderful term, and her students flourished. At the end of the term she thanked the head for granting her wish. “Oh”, he said. “Those weren’t their IQ scores. Those were their locker numbers.”

We see what we expect to see.

Conclusion

I feel that there has never been a more important time for us to recognise that not everybody shares our worldview. And this is not to be meek and mild and woolly-waffly-uber-liberal and say that all worldviews are equally valid. Some worldviews are extremely harmful and dangerous, and we must courageously stand up to those who seek to impose them.

The point here is that humans are (on the whole, but not invariably) rational creatures. We believe things for a reason – because we have been brought up that way, because we are motivated by money to think a certain way, or whatever. When you attack somebody’s ideas, you attack their identity, and they will resist – often fiercely. The more you attack, the fiercer the resistance becomes.

Courage does not have to mean confrontation. Courage can mean having the intellectual capacity to step temporarily outside of your own worldview and do your best to understand why your opponent thinks the way they do. This may take you into scary perspectives, which is why it is not for the faint-hearted. Then, once you’re in their headspace, you can understand what they’re afraid of, what they want, what they’re fighting for and against. And from there, and only from there, can you find better ways to address their needs, ways that don’t ride roughshod over the rights of others to have their needs met.

It’s my personal belief that intellectual courage is exactly what we need right now. It is not soft, but steely. It requires mental agility, flexibility, and great personal integrity. And I’m willing to concede that it may not work. But I believe it has a better chance than dogma, division, and self-righteousness.

Exercise in Intellectual Empathy

(courtesy of Westside Toastmasters)

Try to reconstruct the last argument you had with somebody (a supervisor, colleague, friend, or intimate other). Reconstruct the argument from your perspective and that of the other person. Complete the statements below. As you do, watch that you do not distort the other’s viewpoint. Try to enter into it in good faith, even if it means you have to admit you were wrong. (Remember that critical thinkers want to see the truth in the situation.) After you have completed this activity, show it to the person you were arguing with to see if you have accurately represented their point of view.

- My perspective was as follows (state and elaborate your view)

- The other person’s view was as follows (state and elaborate their point of view)

Other Stuff:

We were joined for the second half of Tuesday’s class by Thomas Wedell-Wedellsborg, who shared his insights on the importance of reframing questions. Too often we allow a carelessly framed question to dictate the solution, and end up solving the wrong problem. See his recent article in the Harvard Business Review for more on reframing, and his website/blog for more about Thomas.

I am heading back to Britain next week to give a lecture at Darwin College, Cambridge on Friday, 10th February. Come if you can, and if you can’t, watch out for the video. I’ll aim to post this blog as usual, but thanks in advance for your patience if there is some delay due to travel.

Podcast Details

YouTube iTunes RSS (Android users can enter the link into their podcast client and subscribe directly)

One Comment